🌊 Drift Detection in Robust Machine Learning Systems

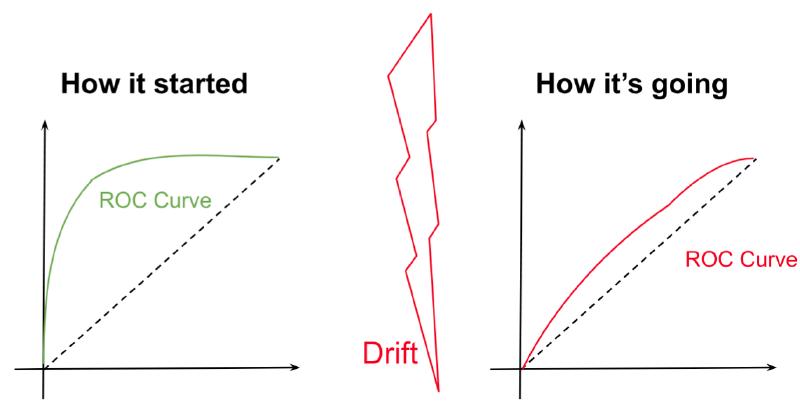

Your model works perfectly in staging. Goes to production. Six months later… it silently fails. The reason? Drift: the data changed, but the model didn’t.

📊 Two types of drift:

- 📉 Data drift: the distribution of the input features changes. It doesn’t always degrade the model, but it’s an early warning.

- 🎯 Concept drift: the relationship between features and target changes, so what the model learned no longer holds. Almost always degrades performance.

🔬 How to detect it:

- K-S test (continuous features), Chi-Squared (categorical features), PSI (binned features) → univariate distribution checks

- Autoencoders → multivariate drift: reconstruction error rises when the joint distribution shifts, even if no individual feature shows it
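
To make the checks above concrete, here is a minimal sketch in plain Python, not a production implementation. It computes the two-sample K-S statistic and PSI for one feature, then uses a linear stand-in for an autoencoder (a one-unit bottleneck, which is equivalent to PCA in two dimensions, not a neural network) to show drift that per-feature checks miss. All names, the synthetic data, the bin count, and the sample sizes are assumptions for illustration.

```python
import bisect
import math
import random


def ks_statistic(reference, current):
    """Two-sample K-S statistic: largest gap between the two empirical CDFs."""
    ref, cur = sorted(reference), sorted(current)
    return max(
        abs(bisect.bisect_right(ref, x) / len(ref) - bisect.bisect_right(cur, x) / len(cur))
        for x in ref + cur
    )


def psi(reference, current, bins=10, eps=1e-6):
    """Population Stability Index over equal-width bins spanning the reference range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    ref_p, cur_p = shares(reference), shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


def fit_line(points):
    """Linear stand-in for an autoencoder with a 1-unit bottleneck (2-D PCA)."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points) / n
    syy = sum((y - my) ** 2 for _, y in points) / n
    sxy = sum((x - mx) * (y - my) for x, y in points) / n
    angle = 0.5 * math.atan2(2 * sxy, sxx - syy)  # direction of largest variance
    return mx, my, math.cos(angle), math.sin(angle)


def reconstruction_error(model, points):
    """Mean squared distance between each point and its projection on the line."""
    mx, my, ux, uy = model
    # The component orthogonal to (ux, uy) is what the bottleneck cannot rebuild.
    return sum(((x - mx) * uy - (y - my) * ux) ** 2 for x, y in points) / len(points)


rng = random.Random(0)
n = 2000

# Univariate case: one feature whose mean moves from 0 to 1 in production.
train_x = [rng.gauss(0, 1) for _ in range(n)]
prod_x = [rng.gauss(1, 1) for _ in range(n)]
print("KS:", round(ks_statistic(train_x, prod_x), 3), "PSI:", round(psi(train_x, prod_x), 3))

# Multivariate case: each feature stays N(0, 1), but their correlation disappears.
train_xy = []
for _ in range(n):
    a = rng.gauss(0, 1)
    train_xy.append((a, 0.9 * a + math.sqrt(1 - 0.9 ** 2) * rng.gauss(0, 1)))
prod_xy = [(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(n)]

model = fit_line(train_xy)
for i in (0, 1):
    ks_i = ks_statistic([p[i] for p in train_xy], [p[i] for p in prod_xy])
    print("feature", i, "KS:", round(ks_i, 3))
print("reconstruction error:", round(reconstruction_error(model, train_xy), 3),
      "->", round(reconstruction_error(model, prod_xy), 3))
```

In the second case the per-feature K-S values stay small while the reconstruction error grows, which is the situation the autoencoder bullet describes. In practice a library such as SciPy (`scipy.stats.ks_2samp`, `scipy.stats.chi2_contingency`) also returns p-values, and a real autoencoder would replace the fitted line.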

🛡️ How to fight it:

- Robust feature selection: prefer features whose distribution and link to the target stay stable over time

- Fallback plan ready before deployment

- Retraining with continual learning
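
One way to wire these responses together is to decide the action from a drift score before each prediction is served. The sketch below is only an illustration: `Action`, `choose_action`, `predict`, the toy model, and the fallback rule are hypothetical names, and the 0.1 / 0.25 cut-offs are a common rule of thumb for PSI, not values from any specific system.

```python
from enum import Enum


class Action(Enum):
    SERVE = "serve"        # keep using the current model
    RETRAIN = "retrain"    # schedule retraining on recent data
    FALLBACK = "fallback"  # route traffic to the pre-agreed fallback


def choose_action(drift_score, warn=0.1, alarm=0.25):
    """Map a drift score (e.g. PSI) to a response; thresholds are illustrative."""
    if drift_score >= alarm:
        return Action.FALLBACK
    if drift_score >= warn:
        return Action.RETRAIN
    return Action.SERVE


def predict(features, model, fallback, drift_score):
    """Serve the model unless drift is severe, then use the fallback instead."""
    if choose_action(drift_score) is Action.FALLBACK:
        return fallback(features)
    return model(features)


# Hypothetical stand-ins: a toy spam "model" and a conservative fallback rule.
def toy_model(features):
    return "spam" if features["links"] > 3 else "ham"


def toy_fallback(features):
    return "needs_review"


print(predict({"links": 5}, toy_model, toy_fallback, drift_score=0.05))  # model answers
print(predict({"links": 5}, toy_model, toy_fallback, drift_score=0.40))  # fallback answers
```

The point of deciding this before deployment is that the fallback path already exists and has been tested when the alarm fires.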

💡 Quick explanation

Imagine training a spam detection model in 2020. By 2026, spammers use entirely new techniques. Your model is still looking at past patterns. That’s drift. Early detection is the difference between a resilient system and one that fails without anyone seeing it coming.

More information at the link 👇

Also published on LinkedIn.