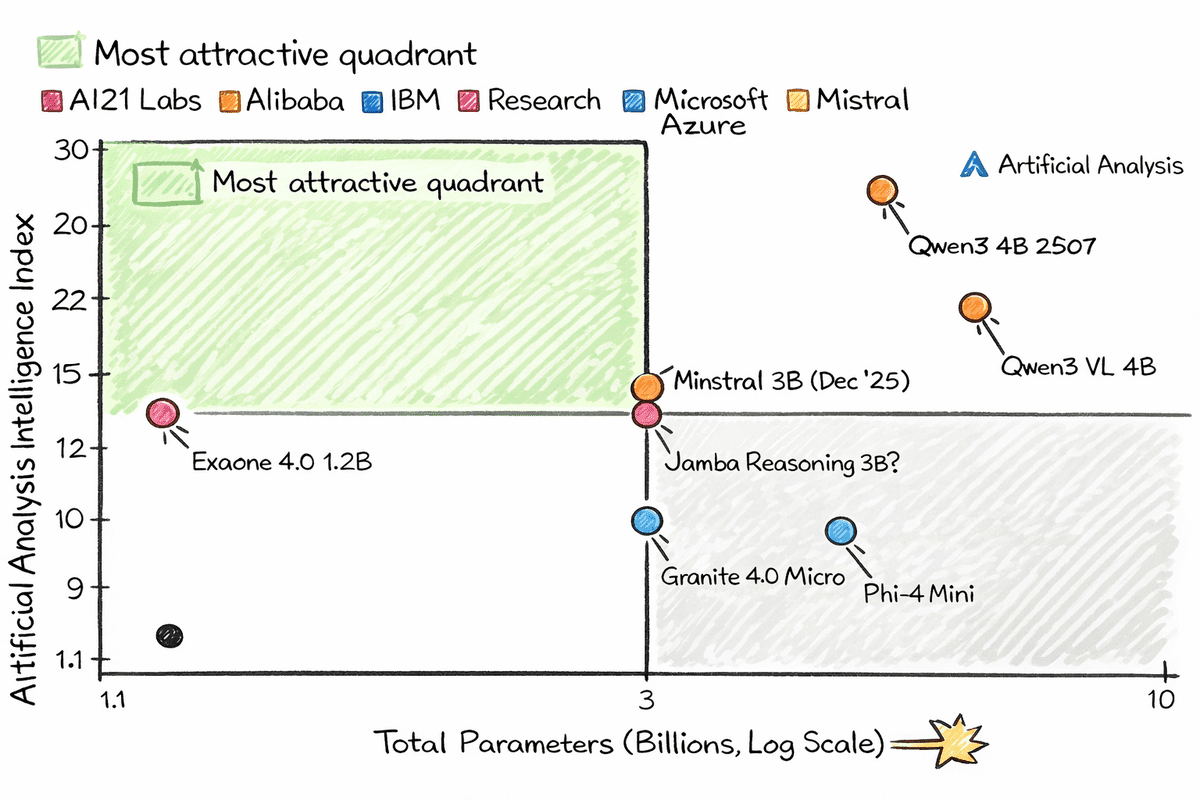

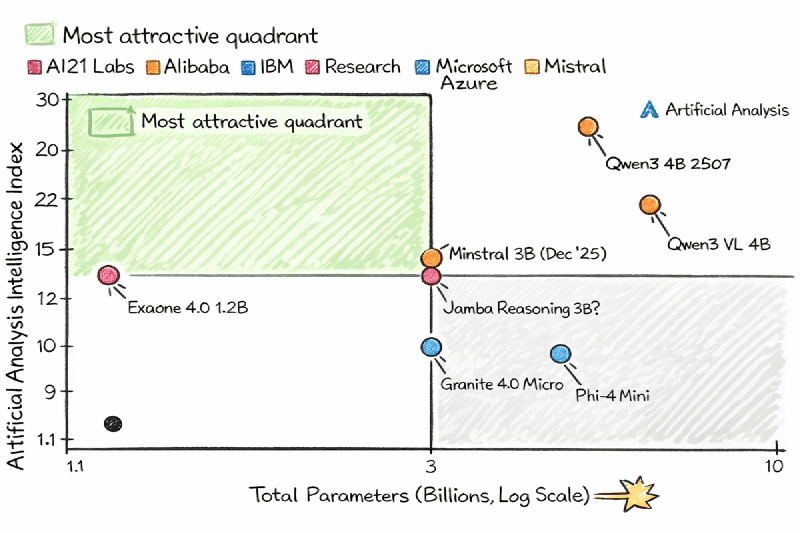

🍓 7 Tiny AI Models That Run on a Raspberry Pi

Thanks to quantization and modern architectures, running AI models locally no longer requires expensive servers. These 7 models, ranging from about 1B to 4B parameters, prove that small doesn’t mean weak. All you need is llama.cpp and a quantized model from Hugging Face.

🤖 The models:

- 🥇 Qwen3 4B 2507 — Reasoning, math, code, tool calling, 256K context. Most recommended.

- 👁️ Qwen3 VL 4B — Multimodal with vision: images, video, and long text. Can act as an autonomous UI agent.

- 🌍 EXAONE 4.0 1.2B — Just 1.2B params, reasoning mode, supports English, Korean, and Spanish.

- 🔧 Ministral 3B — From Mistral AI, vision + structured JSON outputs.

- 🧠 Jamba 3B — Hybrid Transformer-Mamba architecture, high efficiency, 256K context.

- 🏢 Granite 4.0 Micro — IBM, enterprise-focused, RAG and function calling, Apache 2.0.

- 💎 Phi-4 Mini — Microsoft, 3.8B params, high-quality synthetic training data, 128K context.

💡 Quick explanation

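Quantization “compresses” AI models by storing their weights at lower numeric precision (for example, going from 16- or 32-bit floats down to 8- or 4-bit integers). A 4B-parameter model that would need about 16GB of RAM at 32-bit precision shrinks to roughly 2 to 3GB at 4-bit, so it can fit into a Raspberry Pi’s 4GB, usually with only a modest loss in quality. That’s edge AI, literally!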
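🧮 To make the arithmetic concrete, here is a minimal Python sketch. It assumes the simplest possible scheme: one shared scale for all weights and signed integers. Real llama.cpp quantization formats work on small blocks of weights and store extra scale data, so the effective bits per weight end up a little higher. The function names (`weight_memory_gb`, `quantize_symmetric`, `dequantize`) are made up for this illustration and are not part of any library.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes.

    Ignores runtime overhead such as the KV cache and activations.
    """
    return n_params * bits_per_weight / 8 / 1e9


def quantize_symmetric(values, bits):
    """Map floats to signed integers using one shared scale (illustrative only)."""
    qmax = 2 ** (bits - 1) - 1            # e.g. 7 for 4-bit, 127 for 8-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    # Memory needed for a 4B-parameter model at different precisions.
    for bits in (32, 16, 8, 4):
        print(f"{bits:>2}-bit: {weight_memory_gb(4e9, bits):.1f} GB")

    # Round-trip a handful of made-up weights through 4-bit quantization.
    weights = [0.12, -0.5, 0.33, 0.9, -0.07]
    q, scale = quantize_symmetric(weights, bits=4)
    restored = dequantize(q, scale)
    for original, approx in zip(weights, restored):
        print(f"{original:+.3f} -> {approx:+.3f}")
```

The first loop shows where the 16GB figure comes from (4 billion weights × 4 bytes each) and why 4-bit brings it down to about 2GB. The round trip shows the price: each restored weight is off by at most half a quantization step.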

More information at the link 👇

Also published on LinkedIn.