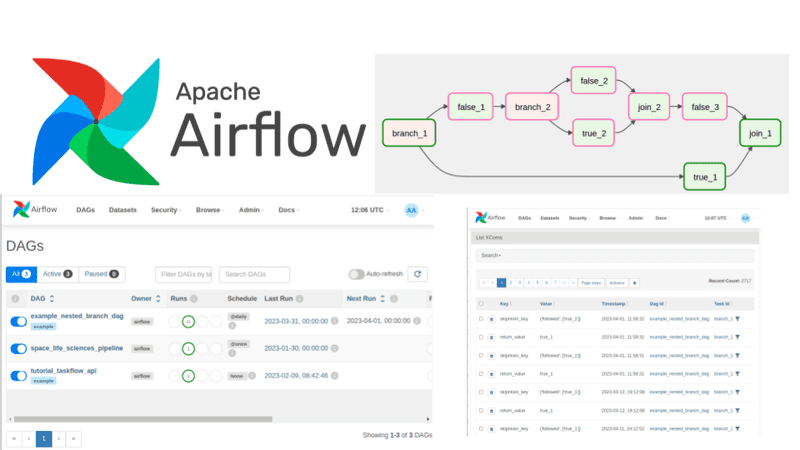

🚀 Apache Airflow: workflow orchestration for data teams

Apache Airflow is an open-source platform for creating, scheduling, and monitoring complex workflows, all defined in Python. It is well suited to data pipelines, machine learning, automation, and cloud operations.

🔑 Key points

- 🧩 Scalable — modular architecture coordinating multiple workers.

- 🐍 Pure Python — define your pipelines as code, no cryptic configuration.

- 🔄 Dynamic — generate tasks and DAGs programmatically.

- 🧱 Extensible — build custom operators and connect to GCP, AWS, Azure, and more.

- 🖥️ Modern UI — clear monitoring, accessible logs, and visual task management.

- 🌐 Open Source — active community, continuous improvements, and open contributions.

🧠 Explanation in a nutshell

Imagine you have many tasks that need to run in a certain order: move data, train a model, send a report, etc.

Airflow acts like a conductor:

- it decides which task goes first,

- controls when it runs,

- checks if it succeeded,

- and lets you automate everything without manual intervention.
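The conductor idea can be sketched in plain Python. This is a toy model, not Airflow's API or its scheduler: `run_pipeline` and the task names are hypothetical, and the ordering comes from the standard library's `graphlib` (Python 3.9+). It only illustrates running tasks in dependency order, recording whether each one succeeded, and skipping anything downstream of a failure.

```python
from graphlib import TopologicalSorter


def run_pipeline(tasks, dependencies):
    """Run callables in dependency order and report the outcome of each.

    tasks: dict mapping task name -> zero-argument callable
    dependencies: dict mapping task name -> set of upstream task names
    Returns a dict mapping task name -> "success", "failed", or "skipped".
    """
    # Include tasks that have no listed dependencies in the graph as well.
    graph = {name: set(dependencies.get(name, ())) for name in tasks}
    status = {}
    # static_order() yields a task only after all of its upstream tasks.
    for name in TopologicalSorter(graph).static_order():
        if any(status[up] != "success" for up in graph[name]):
            status[name] = "skipped"  # an upstream task did not succeed
            continue
        try:
            tasks[name]()
            status[name] = "success"
        except Exception:
            status[name] = "failed"
    return status


# Hypothetical three-step pipeline: move data, train a model, send a report.
log = []
tasks = {
    "move_data": lambda: log.append("move_data"),
    "train_model": lambda: log.append("train_model"),
    "send_report": lambda: log.append("send_report"),
}
dependencies = {"train_model": {"move_data"}, "send_report": {"train_model"}}

status = run_pipeline(tasks, dependencies)
print(log)
print(status)
```

A real orchestrator adds what this sketch leaves out: schedules, retries, parallel workers, persistent state, and a UI.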

If you know Python, you can build these flows much like any other script, with the added ability to schedule, monitor, and scale them.

More information at the link 👇

Also published on LinkedIn.